Dask Summit Gathering together

By Mike McCarty (Capital One Center for Machine Learning) and Matthew Rocklin (Coiled Computing)

In late February members of the Dask community gathered together in Washington, DC. This was a mix of open source project maintainers and active users from a broad range of institutions. This post shares a summary of what happened at this workshop, including slides, images, and lessons learned.

Note: this event happened just before the widespread effects of the COVID-19 outbreak in the US and Europe. We were glad to see each other, but wouldn’t recommend doing this today.

Who came?

This was an invite-only event of fifty people, with a cap of three people per organization. We intentionally invited an even mix of half people who self-identified as open source maintainers, and half people who identified as institutional users. We had attendees from academia, small startups, tech companies, government institutions, and large enterprise. It was surprising how much we all had in common. We had attendees from the following companies:

- Anaconda

- Berkeley Institute for Datascience

- Blue Yonder

- Brookhaven National Lab

- Capital One

- Chan Zuckerberg Initiative

- Coiled Computing

- Columbia University

- D. E. Shaw & Co.

- Flatiron Health

- Howard Hughes Medial Institute, Janelia Research Campus

- Inria

- Kitware

- Lawrence Berkeley National Lab

- Los Alamos National Laboratory

- MetroStar Systems

- Microsoft

- NIMH

- NVIDIA

- National Center for Atmospheric Research (NCAR)

- National Energy Research Scientific Computing (NERSC) Center

- Prefect

- Quansight

- Related Sciences

- Saturn Cloud

- Smithsonian Institution

- SymphonyRM

- The HDF Group

- USGS

- Ursa Labs

Objectives

The Dask community comes from a broad range of backgrounds. It’s an odd bunch, all solving very different problems, but all with a surprisingly common set of needs. We’ve all known each other on GitHub for several years, and have a long shared history, but many of us had never met in person.

In hindsight, this workshop served two main purposes:

- It helped us to see that we were all struggling with the same problems and so helped to form direction and motivate future work

- It helped us to create social bonds and collaborations that help us manage the day to day challenges of building and maintaining community software across organizations

Structure

We met for three days.

On days 1-2 we started with quick talks from the attendees and followed with afternoon working sessions.

Talks were short around 10-15 minutes (having only experts in the room meant that we could easily skip the introductory material) and always had the same structure:

-

A brief description of the domain that they’re in and why it’s important

Example: We look at seismic readings from thousand of measurement devices around the world to understand and predict catastrophic earthquakes

-

How they use Dask to solve this problem

Example: this means that we need to cross-correlate thousands of very long timeseries. We use Xarray on AWS with some custom operations.

-

What is wrong with Dask, and what they would like to see improved

Example: It turns out that our axes labels can grow larger than what Xarray was designed for. Also, the task graph size for Dask can become a limitation

These talks were structured into six sections:

- Workflow and pipelines

- Deployment

- Imaging

- General data analysis

- Performance and tooling

- Xarray

We didn’t capture video, but we do have slides from each of the talks below.

1: Workflow and Pipelines

Blue Yonder

- Title: ETL Pipelines for Machine Learning

- Presenters: Florian Jetter

- Also attending:

- Nefta Kanilmaz

- Lucas Rademaker

Prefect

- Title: Prefect + Dask: Parallel / Distributed Workflows

- Presenters: Chris White, CTO

Dask + Prefect from Chris White </div>

SymphonyRM

- Title: Dask and Prefect for Data Science in Healthcare

- Presenter: Joe Schmid, CTO

2: Deployment

Quansight

- Title: Building Cloud-based Data Science Platforms with Dask

- Presenters: Dharhas Pothina

- Also attending: - James Bourbeau - Dhavide Aruliah

NVIDIA and Microsoft/Azure

- Title: Native Cloud Deployment with Dask-Cloudprovider

- Presenters: Jacob Tomlinson, Tom Drabas, and Code Peterson

Inria

- Title: HPC Deployments with Dask-Jobqueue

- Presenters: Loïc Esteve

Anaconda

- Title: Dask Gateway

- Presenters: Jim Crist

- Also attending: - Tom Augspurger - Eric Dill - Jonathan Helmus

3: Imaging

Kitware

- Title: Scientific Image Analysis and Visualization with ITK

- Presenters: Matt McCormick

Kitware

- Title: Image processing with X-rays and electrons

- Presenters: Marcus Hanwell

National Institutes of Mental Health

- Title: Brain imaging

- Presenters: John Lee

Janelia / Howard Hughes Medical Institute

- Title: Spark, Dask, and FlyEM HPC

- Presenters: Stuart Berg

4: General Data Analysis

Brookhaven National Labs

- Title: Dask at DOE Light Sources

- Presenters: Dan Allan

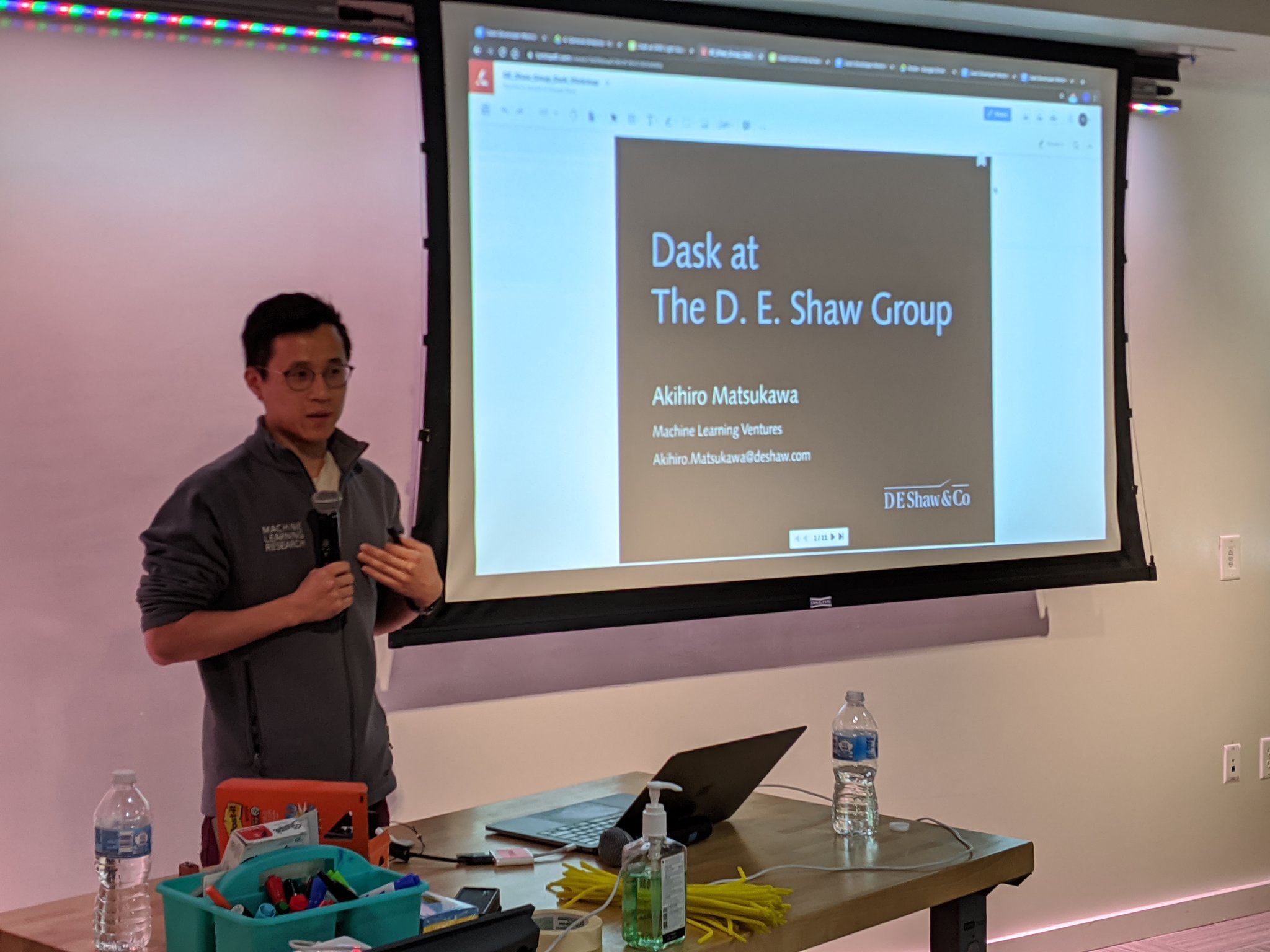

D.E. Shaw Group

- Title: Dask at D.E. Shaw

- Presenters: Akihiro Matsukawa

Anaconda

- Title: Dask Dataframes and Dask-ML summary

- Presenters: Tom Augspurger

5: Performance and Tooling

Berkeley Institute for Data Science

- Title: Numpy APIs

- Presenters: Sebastian Berg

Ursa Labs

- Title: Arrow

- Presenters: Joris Van den Bossche

NVIDIA

- Title: RAPIDS

- Presenters: Keith Kraus

- Also attending: - Mike Beaumont - Richard Zamora

NVIDIA

- Title: UCX

- Presenters: Ben Zaitlen

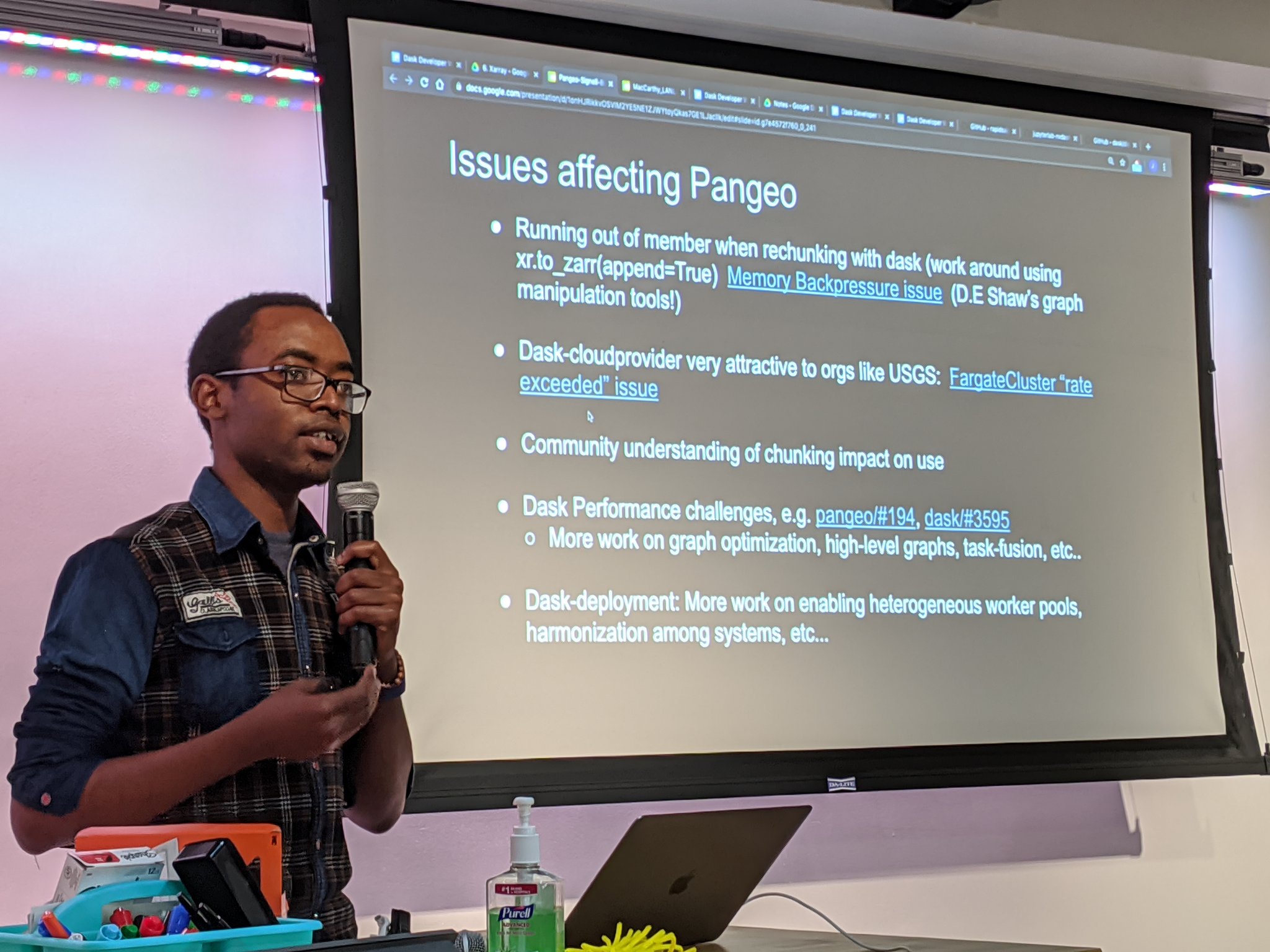

6: Xarray

USGS and NCAR

- Title: Dask in Pangeo

- Presenters: Rich Signell and Anderson Banihirwe

LBNL

- Title: Accelerating Experimental Science with Dask

- Presenters: Matt Henderson

- Slides - Fill too large to preview

LANL

- Title: Seismic Analysis

- Presenters: Jonathan MacCarthy

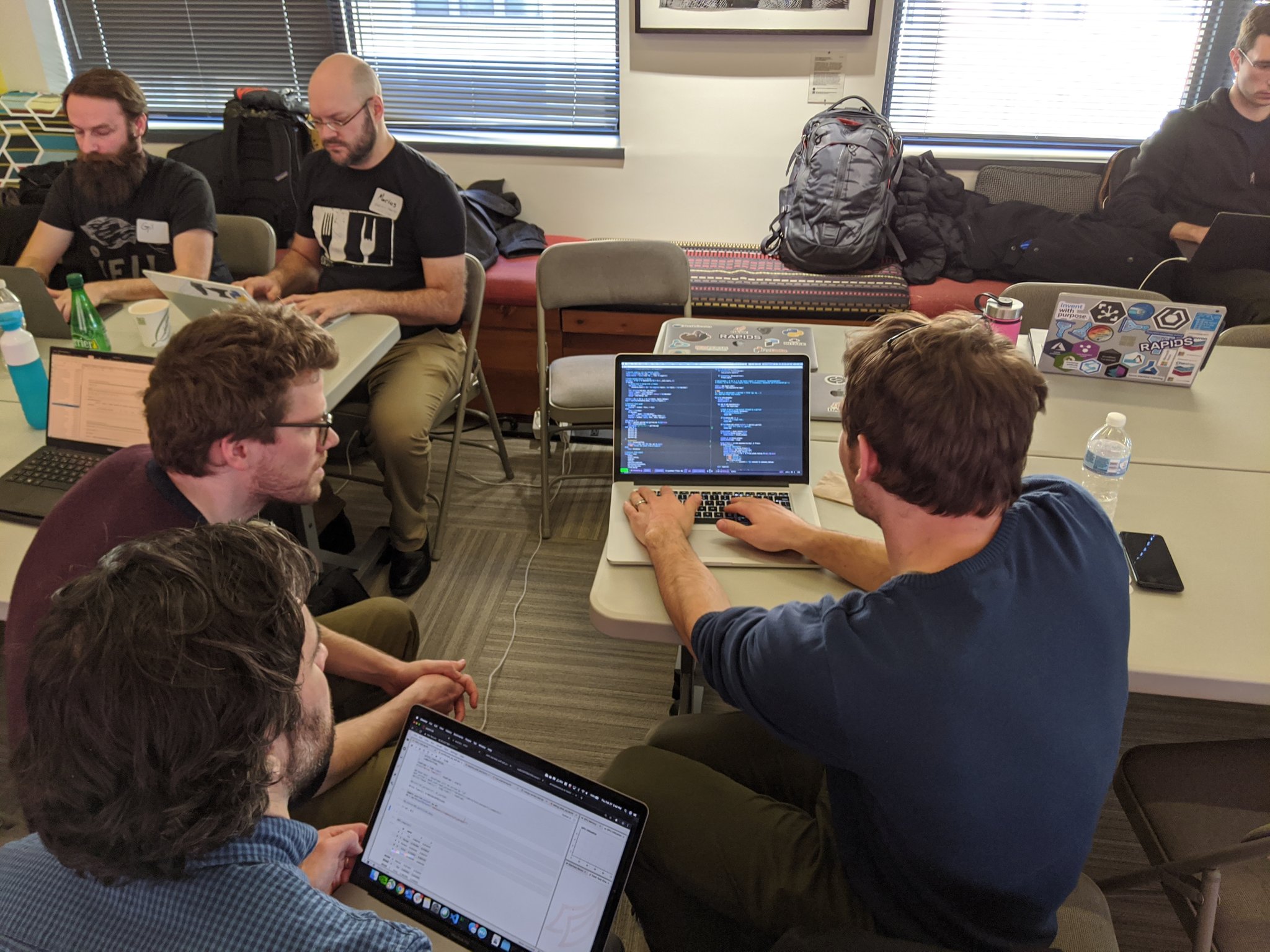

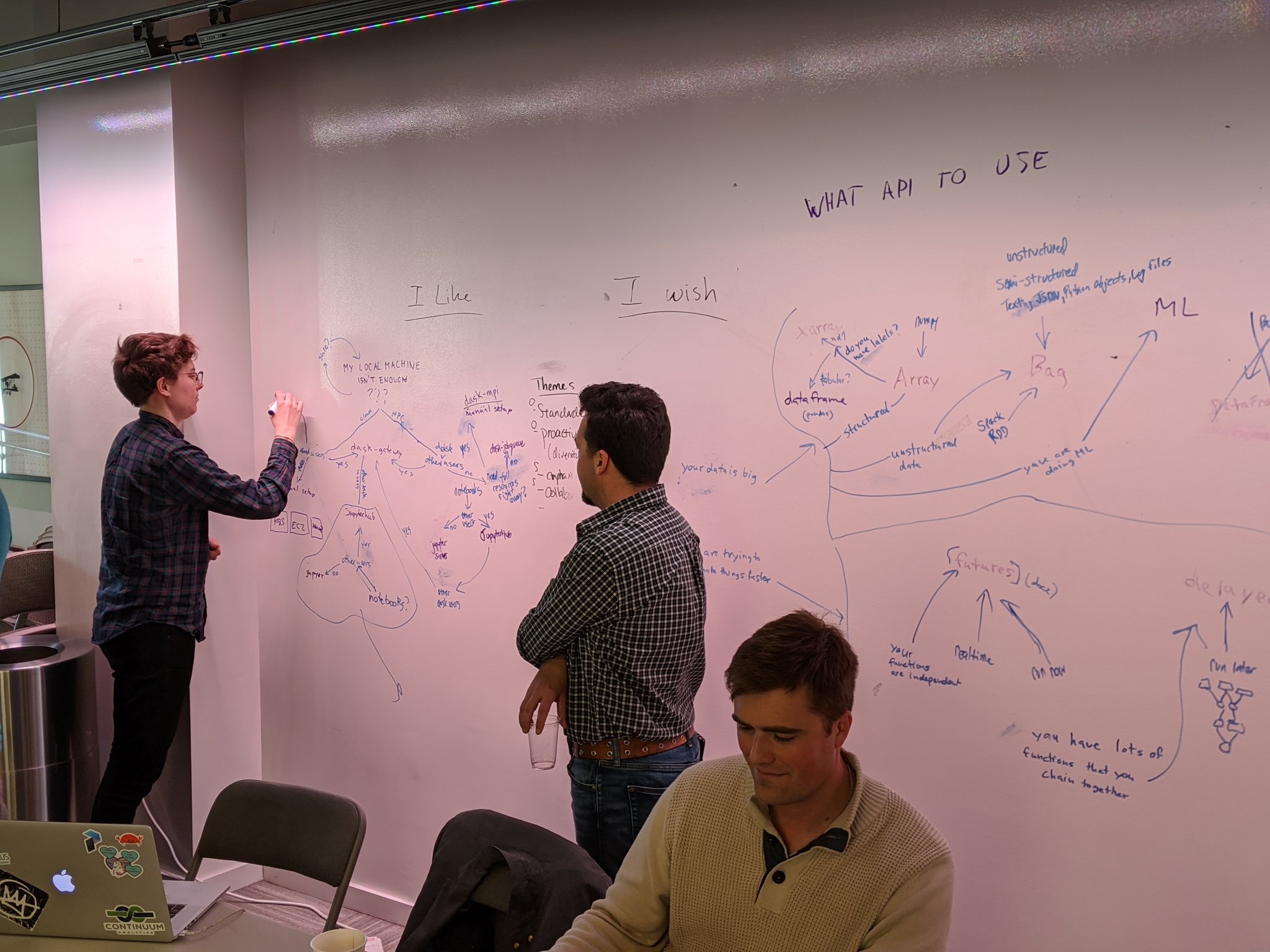

Unstructured Time

Having rapid fire talks in the morning, followed by unstructured time in the afternoon was a productive combination. Below you’ll see pictures from geo-scientists and quants talking about the same challenges, and library maintainers from Pandas/Arrow/RAPDIS/Dask all working together on joint solutions.

This unstructured time is a productive combination that we would recommend to other technically diverse groups in the future. Engagement and productivity was really high throughout the workshop.

Final Thoughts

Dask’s strength comes from this broad community of stakeholders.

An early technical focus on simplicity and pragmatism allowed the project to be quickly adopted within many different domains. As a result, the practitioners within these domains are largely the ones driving the project forward today. This Community Driven Development brings an incredible diversity of technical and cultural challenges and experience that force the project to quickly evolve in a way that is constrained towards pragmatism.

There is still plenty of work to do. Short term this workshop brought up many technical challenges that are shared by all (simpler deployments, scheduling under task constraints, active memory management). Longer term we need to welcome more people into this community, both by increasing the diversity of domains, and the diversity of individuals (the vast majority of attendees were white men in their thirties from the US and western Europe).

We’re in a good position to effect this change. Dask’s recent growth has captured the attention of many different institutions. Now is a critical time to be intentional about the projects growth to make sure that the project and community continue to reflect a broad and ethical set of principles.

Acknowledgements

Sponsors

Without the support of our sponsors, this workshop would not have been possible. Thanks to Anaconda, Capital One and NVIDIA for their support and generous donations toward this event.

Organizers

Thank you very much to the organizers who took time from their busy schedules and worked so hard to put together this event.

- Brittany Treadway (Capital One)

- Keith Kraus (NVIDIA)

- Matthew Rocklin (Coiled Computing)

- Mike Beaumont (NVIDIA)

- Mike McCarty (Capital One)

- Neia Woodson (Capital One)

- Jake Schmitt (Capital One)

- Jim Crist (Anaconda)

blog comments powered by Disqus